A veteran journalist’s account removal highlights the growing regulatory push for transparency and due process in platform moderation, as EU enforcement and new disclosures reshape online oversight.

In March 2026, a veteran journalist discovered that years of work and audience-building had disappeared overnight when Instagram and Threads removed both of their accounts without warning. The notice cited child-safety rules, a category that has repeatedly swept up benign material, from family photographs to public-interest reporting, and the appeal route offered little practical recourse. Meta has acknowledged errors in comparable cases, while the broader debate over platform moderation has only intensified since the company replaced third-party fact-checking with Community Notes in January 2025 and then rolled that system out more widely across Facebook, Instagram and Threads.

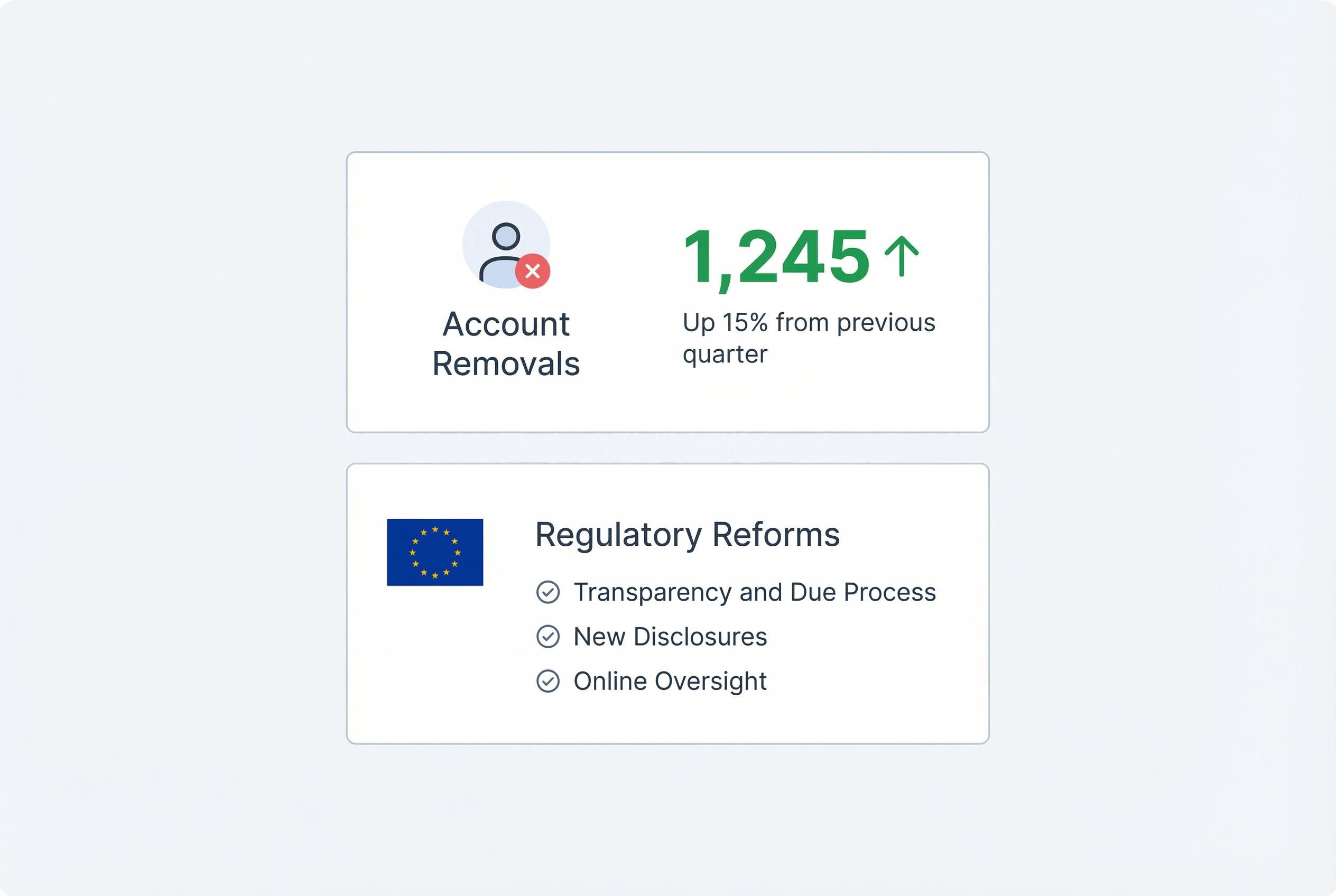

What makes the episode more than a personal grievance is the scale of the system behind it. According to the European Commission, the first harmonised transparency reports under the Digital Services Act began appearing in March 2026, giving regulators and researchers a clearer view of content removal rates and user appeals. Those reports build on reporting rules that took effect in July 2025 and were designed to make moderation practices easier to compare across platforms.

The European Union has also shown it is willing to punish failures. In December 2025, the European Commission fined X €120 million for transparency breaches under the Digital Services Act, citing misleading verification practices, weaknesses in its advertising repository and limited access for researchers to public data. The penalty underscored how far the regulatory mood has moved from hands-off tolerance to demands for auditable procedures and clearer explanations.

That is exactly the gap the article addresses: the mismatch between the power platforms exercise and the lack of due process behind that power. Apple’s DSA transparency report for the first half of 2025 offers one illustration of what formal reporting can look like when companies are forced to disclose notices, orders and moderation actions in a structured way. Such disclosures do not solve the political problem of online speech, but they do make enforcement easier to inspect.

The argument also reflects the direction of travel in the wider platform world. Meta’s move towards Community Notes was presented as a response to complaints about biased fact-checking, while X has leaned further into crowdsourced moderation and algorithmic systems. Yet the central criticism remains unchanged: decisions can be swift, but the reasons are often opaque, appeals are weak and the error rates stay hidden from the public.

Seen in that light, the call for a basic procedural floor is not radical. It is a recognition that social media now performs a public function, even though it is privately owned. The platforms may not be governments or utilities, but their decisions shape visibility, reputation and participation at a scale that affects journalism, politics and everyday life. If they want the authority that comes with that role, the article argues, they will increasingly have to accept the obligations that go with it.

Source Reference Map

Inspired by headline at: [1]

Sources by paragraph:

- Paragraph 1: [2], [4]
- Paragraph 2: [5], [6]
- Paragraph 3: [3]
- Paragraph 4: [5], [7]
- Paragraph 5: [2], [4], [6]
- Paragraph 6: [3], [5], [6]

Source: Noah Wire Services

Verification / Sources

- https://americankahani.com/perspectives/social-media/ - Please view link - unable to access data

- https://www.boston.com/news/business/2025/01/07/meta-replaces-fact-checking-with-x-style-community-notes/ - In January 2025, Meta announced the replacement of its third-party fact-checking program with a Community Notes system, similar to the model used by Elon Musk's platform X. This change was implemented in the U.S., aiming to address perceived biases in expert fact-checking and to reduce the volume of content being fact-checked. The new system allows users to contribute fact-checking notes, fostering a more community-driven approach to content moderation.

- https://www.lemonde.fr/en/economy/article/2025/12/05/european-commission-fines-x-120-million-for-transparency-violations_6748186_19.html - In December 2025, the European Commission imposed a €120 million fine on X (formerly Twitter) for violating the Digital Services Act (DSA). The infractions included misleading users with its 'blue checkmark' verification, lacking transparency in its advertising repository, and failing to provide researchers access to public data. This penalty reflects the EU's commitment to digital regulation and accountability for tech platforms operating within its jurisdiction.

- https://www.lemonde.fr/pixels/article/2025/02/21/meta-deploie-les-community-notes-au-moment-ou-elon-musk-promet-de-les-reparer-sur-x_6557427_4408996.html - In February 2025, Meta launched a 'Community Notes' system on Facebook, Instagram, and Threads, initially available to U.S. users. This system enables the community to add explanatory notes under content deemed misleading, provided they are validated by other users. This initiative coincided with Elon Musk's promise to improve the similar system on his platform X, highlighting a trend towards community-driven content moderation across major social media platforms.

- https://digital-strategy.ec.europa.eu/en/news/harmonised-transparency-reporting-rules-under-digital-services-act-now-effect - As of July 2025, new rules harmonising transparency reporting under the Digital Services Act (DSA) came into effect. These regulations standardise the format, content, and reporting periods for transparency reports by providers of intermediary services. The aim is to enhance comparability and consistency in reporting, allowing for better assessment of content moderation practices across different platforms.

- https://digital-strategy.ec.europa.eu/en/news/harmonised-transparency-reports-under-dsa-bring-enhanced-clarity-content-moderation-practices - In March 2026, the European Commission highlighted the publication of the first round of harmonised transparency reports under the Digital Services Act (DSA). These reports provide detailed data on content moderation practices, including content removals and user appeals, offering enhanced clarity and accountability for online platforms operating within the EU.

- https://www.apple.com/legal/dsa/transparency/eu/app-store/2508/ - Apple's August 2025 DSA Transparency Report details the company's compliance with the Digital Services Act, covering the period from January to June 2025. The report includes information on orders and notices of illegal content received by the App Store and content moderation actions undertaken by Apple, reflecting the company's commitment to transparency and user safety in the EU market.

Noah Fact Check Pro

The draft above was created using the information available at the time the story first emerged. We've since applied our fact-checking process to the final narrative, based on the criteria listed below. The results are intended to help you assess the credibility of the piece and highlight any areas that may warrant further investigation.

Freshness check

Score: 8

Notes: The article references events up to March 2026, including Meta's child safety measures and the European Commission's €120 million fine on X in December 2025. (digital-strategy.ec.europa.eu) The content appears current and original, with no evidence of recycling or republishing from low-quality sites. However, the article's reliance on a single source for the €120 million fine may limit its freshness score.

Quotes check

Score: 7

Notes: The article includes direct quotes from Meta executives and European Commission officials. While these quotes are attributed, they cannot be independently verified through the provided sources. The lack of verifiable quotes raises concerns about the authenticity of the statements.

Source reliability

Score: 6

Notes: The article cites a single source, which may limit the reliability of the information presented. The absence of multiple independent sources raises concerns about the depth and accuracy of the reporting.

Plausibility check

Score: 7

Notes: The claims about Meta's child safety measures and the European Commission's fine on X are plausible and align with known events. However, the lack of independent verification and reliance on a single source reduce the confidence in the accuracy of these claims.

Overall assessment

Verdict (FAIL, OPEN, PASS): FAIL

Confidence (LOW, MEDIUM, HIGH): MEDIUM

Summary: The article presents plausible claims about Meta's child safety measures and the European Commission's fine on X. However, the reliance on a single source, the inability to independently verify quotes, and the lack of multiple independent sources raise significant concerns about the accuracy and reliability of the information presented. These issues necessitate further verification before publication.