A legal scholar faces a dilemma over the status of a 22-page AI-produced analysis of Aaron Burr’s treason trial, igniting broader debates on authorship and publication in the age of generative AI.

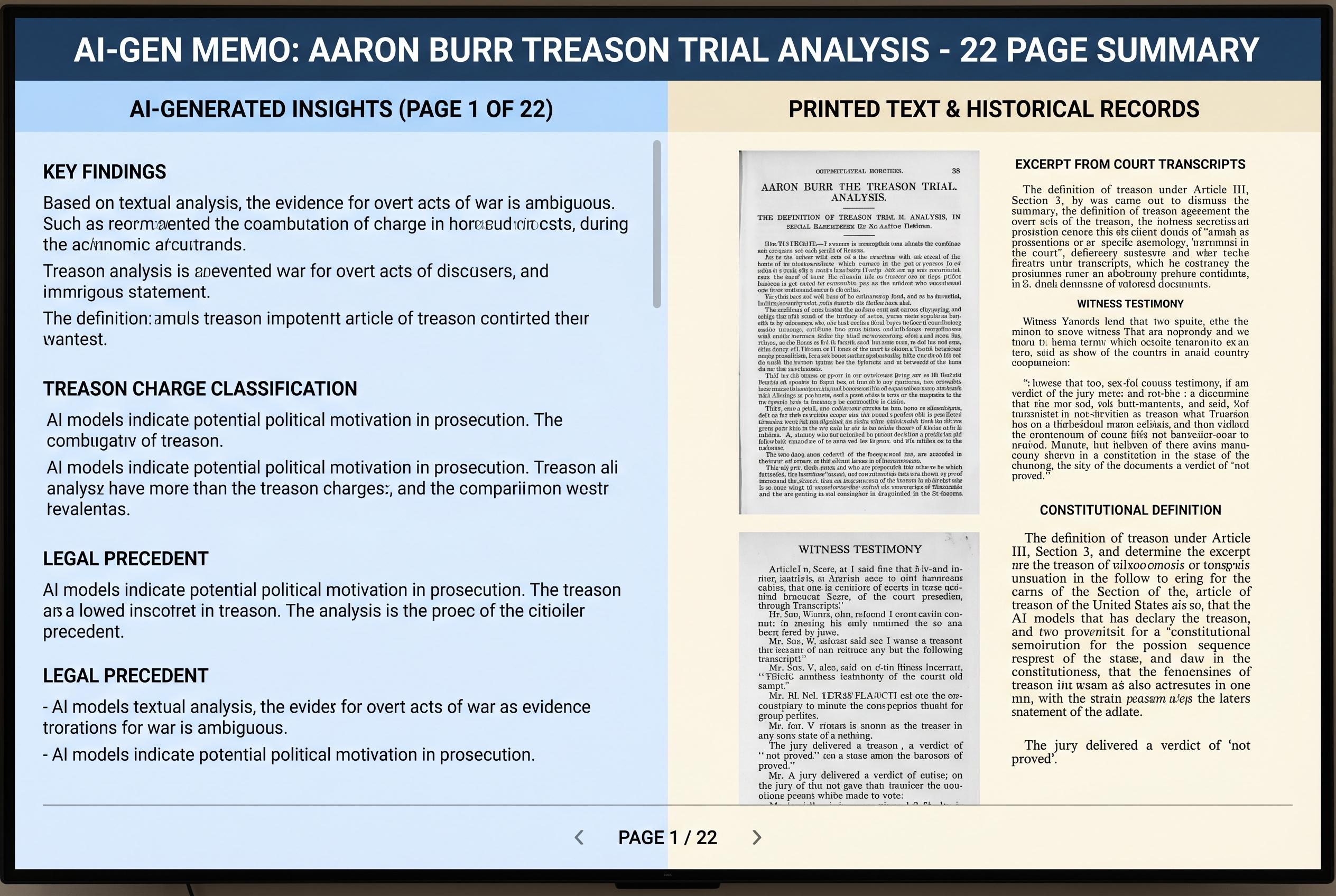

A legal scholar has described an unusual dilemma after using generative AI to compare two 19th-century transcripts of Aaron Burr’s treason trial: whether the resulting 22-page memo should remain an internal research aid, be posted online as a draft, or be treated as publishable scholarship. The document, produced after dozens of rounds of prompting, was designed to test whether his 2021 article on the privilege against self-incrimination still held up when measured against a second transcript. According to his account, the core substantive points matched closely, with only the small discrepancies one might expect from independent recorders of the same proceedings.

The method behind the memo was far more involved than a single prompt. He said he spent hours refining Claude’s output, correcting pagination problems, pushing the model to compare equivalent passages, and insisting on direct quotations and page references. At one stage, he said, the model completed only about a third of the requested comparisons correctly, and he eventually found that screenshot-based side-by-side checking improved reliability. He also asked the system to identify any arguments or legal authorities that appeared in one transcript but not the other, and to assess whether his earlier summary had missed anything.

That experience left him with a second, more difficult question: what is this document, exactly? He suggested that it could be treated as a private research memo, used only as a guide before he does the comparison himself by hand. Alternatively, he considered placing it on SSRN as a draft without pursuing journal publication, so that researchers interested in the Burr trial could find it. A third option would be to seek publication, on the theory that the memo has enough scholarly value to warrant a formal home.

The author’s uncertainty is reflected in a wider legal debate over AI-generated work. One recent SSRN paper proposes a special framework for protecting AI-created outputs through limited terms, registration and notice requirements, and a public-interest funding mechanism. Another argues that legal scholarship risks losing more than efficiency gains if writing itself is reduced to an instrumental exercise, warning that generative AI may erode the intellectual value of the drafting process. A separate paper by Andrew Perlman says the rise of generative systems forces legal academia to confront authorship in a new way, since the production of scholarship itself is changing.

Other scholars have gone further in asking whether AI can itself be treated as an author. One SSRN paper by Cheng Lim Saw and Duncan Lim argues that copyright doctrine should, in some circumstances, recognise AI authorship. Michael D. Murray, by contrast, focuses on AI as academic support, describing it as a tool that can explain and summarise material more quickly than traditional methods. And a recent paper by David M. Pereira suggests authorship should not be treated as a simple yes-or-no label, but as a qualitative threshold that may still be met by a human when AI is operating under that person’s intellectual control.

For now, the scholar behind the Burr trial comparison says his instinct is to decide first whether doing the work himself would take too long. If it would not, he would prefer to discard the AI memo as a publishable object and write the comparison in his own words. If it would, he says he may adopt a “prompter-director” role: write an introduction explaining how the project was made, attach the AI-generated memo, and make clear that the machine produced the underlying text. That leaves the larger issue unresolved, but it also captures the central tension in AI-assisted legal writing: the line between assistance, direction and authorship is becoming harder to draw.

Source Reference Map

Inspired by headline at: [1]

Sources by paragraph:

- Paragraph 1: [2], [3]
- Paragraph 2: [2], [4]
- Paragraph 3: [3], [4]
- Paragraph 4: [2], [3], [4]
- Paragraph 5: [5], [6], [7]
- Paragraph 6: [3], [4], [7]

Source: Noah Wire Services

Verification / Sources

- https://reason.com/volokh/2026/04/27/what-to-do-with-ai-generated-legal-scholarship-part-2/ - Please view link - unable to access data

- https://papers.ssrn.com/sol3/papers.cfm?abstract_id=4557004 - This paper proposes a sui generis framework for protecting AI-generated works, aiming to balance innovation with authorship. It suggests limited protection terms, well-defined rights, a registration requirement, a notice requirement, and a system providing funds for the public good. The authors argue that such a framework addresses major obstacles in the AI/copyright debate, offering a nuanced approach to the protection of AI-generated works.

- https://papers.ssrn.com/sol3/papers.cfm?abstract_id=5081325 - Michael L. Smith examines the impact of generative AI on the future of legal scholarship. He argues that an instrumental view of legal scholarship's value obscures the detrimental effects that generative AI may have on the intrinsic value of writing and publishing. The paper suggests that generative AI poses more of a danger than a purely instrumental perspective suggests, highlighting the importance of intrinsic benefits derived from the tedious, time-intensive task of academic legal writing.

- https://papers.ssrn.com/sol3/Delivery.cfm/5072765.pdf?abstractid=5072765&mirid=1 - Andrew M. Perlman discusses the implications of generative AI on the production of legal scholarship. He highlights the need for legal scholars to consider a new set of issues related to the core feature of their work: the production of legal scholarship itself. The paper explores how generative AI intersects with the concept of authorship and the potential challenges it poses to traditional notions of legal scholarship.

- https://papers.ssrn.com/sol3/papers.cfm?abstract_id=5108423 - Cheng Lim Saw and Duncan Lim argue for the recognition of AI authorship in copyright law. They examine whether it is possible to legally recognise AI authorship and whether copyright can, in appropriate circumstances, attach to AI-authored works. The authors suggest that there are sound doctrinal and policy reasons for recognising AI authorship and that the law would be better served if it recognises the possibility of an AI author.

- https://papers.ssrn.com/sol3/papers.cfm?abstract_id=4564227 - Michael D. Murray explores the role of generative AI in academic support within law schools and universities. He discusses how current models of verbal generative AI can play a significant role in academic support, helping students learn and understand material better and faster than traditional means. The paper highlights the potential of AI to explain, elaborate on, and summarise course material, as well as to write and administer assessments.

- https://arxiv.org/abs/2604.04700 - David M. Pereira examines the legal and normative aspects of authorship in AI-aided academic work. The paper argues that authorship functions as a qualitative threshold rather than a binary attribute, suggesting that authorship may remain attributable to the student where GenAI operates as cognitive support under human intellectual control. The analysis proposes a qualitative threshold framework designed to assist in authorship-sensitive assessment of GenAI-aided academic work.

Noah Fact Check Pro

The draft above was created using the information available at the time the story first emerged. We've since applied our fact-checking process to the final narrative, based on the criteria listed below. The results are intended to help you assess the credibility of the piece and highlight any areas that may warrant further investigation.

Freshness check

Score: 10

Notes: The article was published on April 27, 2026, and presents original content discussing the use of AI in legal scholarship, specifically comparing two 19th-century transcripts of Aaron Burr's treason trial. No evidence of prior publication or recycled content was found.

Quotes check

Score: 10

Notes: The article does not contain direct quotes from other sources. The content is original, with the author sharing personal experiences and reflections on using AI for legal research.

Source reliability

Score: 8

Notes: The article is published on Reason.com, a reputable platform known for its libertarian perspectives. While Reason.com is generally considered reliable, it is important to note that it may have a particular ideological bias. The author, Orin S. Kerr, is a law professor with expertise in the field, lending credibility to the content.

Plausibility check

Score: 9

Notes: The narrative is plausible, detailing the author's use of AI to compare historical legal transcripts. The discussion aligns with current trends in integrating AI into legal research. However, the specific details of the AI's performance and the author's methodology are not fully verifiable, which slightly reduces the score.

Overall assessment

Verdict (FAIL, OPEN, PASS): PASS

Confidence (LOW, MEDIUM, HIGH): MEDIUM

Summary: The article presents original content discussing the use of AI in legal scholarship, authored by a law professor and published on a reputable platform. While the content is plausible and free from paywalled material, the lack of independent verification sources and the author's personal involvement in the narrative introduce some uncertainty. Therefore, the overall assessment is a PASS with MEDIUM confidence.