The EU’s temporary carve-out allowing platforms to scan for child sexual abuse material has lapsed, leaving tech giants in legal limbo and raising concerns that abuse will become harder to detect.

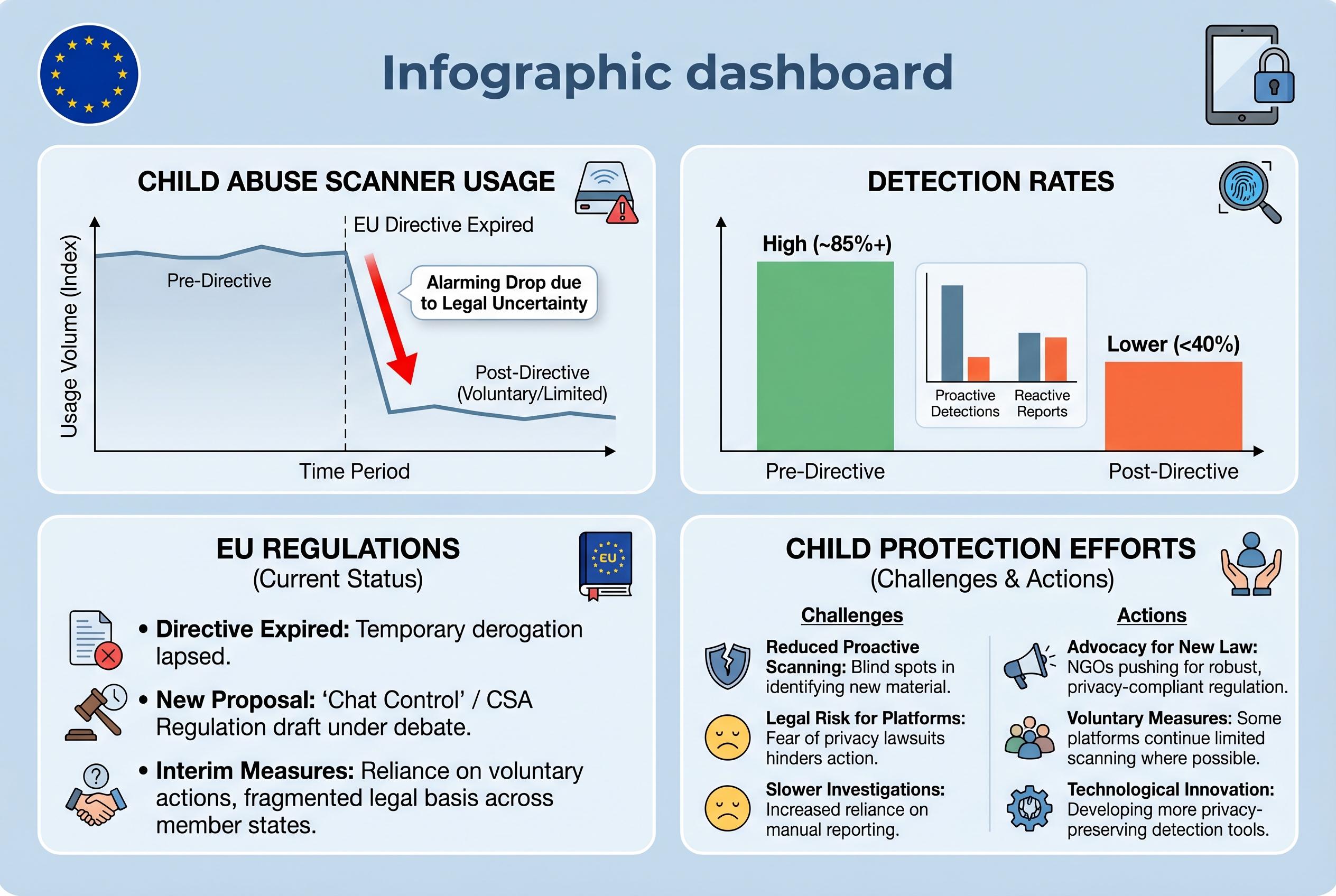

The European Parliament’s refusal to prolong a temporary exemption that allowed platforms to scan for child sexual abuse material has opened a legal and political fault line in Brussels, leaving major technology groups in a difficult position just as child-safety advocates warn that abuse could become harder to detect. The Guardian reported that the carve-out, introduced in 2021 as an interim measure under EU privacy rules, expired on 3 April after lawmakers declined to extend it, despite pressure from child protection organisations and the companies themselves. Google, Meta, Snap and Microsoft said they would keep scanning voluntarily for now, but the broader legal picture has become less certain.

The parliament’s move came after a messy few weeks in which its position shifted. Official European Parliament material shows that on 6 March MEPs backed an extension of the exemption until August 2027, a shorter period than the Commission had proposed, with safeguards intended to keep the measure targeted and proportionate. But by 27 March, lawmakers had rejected the extension in a vote that ended the chamber’s first reading on the proposal, with 228 in favour, 311 against and 92 abstentions. The result leaves a gap between the old temporary regime and any permanent law, even as negotiations continue on a wider framework to combat online child sexual abuse.

The stakes are high. The National Center for Missing and Exploited Children (NCMEC) says it received 21.3 million reports in 2025 containing more than 61.8 million suspected abuse files from around the world, and that about 90% of those reports related to countries outside the US. Child safety groups say detection tools are crucial because they generate reports that help investigators identify victims and offenders, including in cross-border cases. John Shehan of NCMEC warned that when detection is disrupted, “the abuse doesn’t stop”, a point echoed by advocates who fear the lapse will mean fewer referrals to law enforcement.

Privacy campaigners, however, have long argued that automated scanning of messages risks normalising surveillance and could produce false positives. The European Data Protection Supervisor said in February that any extension had to address weaknesses in the interim regime and avoid indiscriminate scanning, insisting on measures that are targeted and proportionate. The European Parliament has also said it is working on a permanent law. The Council of the European Union, for its part, adopted its own position in November 2025 on legislation that would impose duties on platforms to prevent the spread of abuse material and the solicitation of children, and would create an EU Centre on Child Sexual Abuse. For now, though, the temporary compromise has fallen away, and the long-promised replacement is still unfinished.

Source Reference Map

Inspired by headline at: [1]

Sources by paragraph:

- Paragraph 1: [2]
- Paragraph 2: [3], [4]
- Paragraph 3: [2], [5]
- Paragraph 4: [3], [5], [6]

Source: Noah Wire Services

Verification / Sources

- https://www.theguardian.com/technology/2026/apr/09/google-meta-snap-microsoft-eu-child-sexual-abuse - Please view link - unable to access data

- https://www.theguardian.com/technology/2026/apr/09/google-meta-snap-microsoft-eu-child-sexual-abuse - The European Parliament has blocked the extension of a law that permitted major tech companies to scan for child sexual exploitation on their platforms. This decision has created a legal gap, raising concerns among child safety experts that crimes may go undetected. The law, which was a temporary measure allowing companies to use automated detection technologies to scan messages for harms, including child sexual abuse material (CSAM), expired on 3 April. The European Parliament decided not to vote to extend it, citing privacy concerns from some lawmakers. This regulatory gap has created uncertainty for big tech companies, as scanning for harms on their platforms is now illegal, yet they remain liable to remove any illegal content hosted on their platforms under a different law, the Digital Services Act. In response, Google, Meta, Snap, and Microsoft have stated they will continue to voluntarily scan their platforms for CSAM. The European Parliament has indicated that it is prioritising work on legislation to prevent and combat child sexual abuse online, with ongoing negotiations for a permanent legal framework, though no timeline for agreements or implementation has been provided.

- https://www.europarl.europa.eu/news/en/press-room/20260325IPR39207/ - On 27 March 2026, the European Parliament voted against extending an interim derogation from e-Privacy rules that allowed service providers to voluntarily detect child sexual abuse material (CSAM) online. The proposal was rejected with 228 votes in favour, 311 against, and 92 abstentions. This decision effectively closed the European Parliament's first reading on extending an existing derogation of the e-Privacy Directive. The purpose of the proposed extension was to continue temporary measures while negotiations continued on a long-term legal framework to prevent and combat child sexual abuse online. The Parliament's position, adopted on 11 March 2026, favoured extending the measures for a shorter period (until August 2027) than the Commission proposal and with a narrower scope to ensure the measures remained proportional and targeted.

- https://www.europarl.europa.eu/news/en/press-room/20260306IPR37531/child-sexual-abuse-online-support-for-extending-rules-until-august-2027 - On 6 March 2026, the European Parliament supported extending an exemption to privacy legislation that allows the voluntary detection of child sexual abuse material (CSAM) online until 3 August 2027. With 458 votes in favour, 103 against, and 63 abstentions, Members of the European Parliament (MEPs) endorsed a temporary extension of a current derogation of the e-Privacy Directive, which was due to expire on 3 April 2026. This extension was intended to allow time for an agreement on a long-term legal framework to prevent and combat child sexual abuse online. While supporting the extension, MEPs emphasised that the voluntary measures should remain proportional and targeted, should not apply to end-to-end encrypted communications, and should not involve scanning traffic data alongside content data.

- https://www.edps.europa.eu/press-publications/press-news/press-releases/2026/extension-interim-rules-to-combat-child-sexual-abuse-online-must-address-shortcomings-and-prevent-indiscriminate-scanning - On 17 February 2026, the European Data Protection Supervisor (EDPS) issued Opinion 7/2026 on the European Commission’s proposal to extend the application of Regulation (EU) 2021/1232, which pertains to interim rules regarding data processing for the purposes of combating child sexual abuse material (CSAM) online. The proposal sought to extend the interim framework, due to expire on 3 April 2026, until 3 April 2028. The EDPS underlined that while combating child sexual abuse is a serious and recognised objective of the Union, the extension must address shortcomings and prevent indiscriminate scanning. The EDPS highlighted the need for measures to be targeted, proportionate, and limited to what is necessary, ensuring that fundamental rights are safeguarded.

- https://www.consilium.europa.eu/en/press/press-releases/2025/11/26/child-sexual-abuse-council-reaches-position-on-law-protecting-children-from-online-abuse/ - On 26 November 2025, the Council of the European Union reached a position on a regulation to prevent and combat child sexual abuse. The new law, once adopted, will impose obligations on digital companies to prevent the dissemination of child sexual abuse material and the solicitation of children. Competent national authorities will have the power to oblige companies to remove and block access to such content or, in the case of search engines, delist search results. The regulation also establishes a new EU agency, the EU Centre on Child Sexual Abuse, to support member states and online providers in implementing the law. The Council's position includes making permanent a currently temporary measure that allows companies to voluntarily scan their services for child sexual abuse material, even though this exemption is due to expire on 3 April 2026.

- https://www.cereport.eu/news/politics/90414 - On 12 March 2026, the European Parliament supported extending an exemption to privacy legislation that allows the voluntary detection of child sexual abuse material (CSAM) online until 3 August 2027. With 458 votes in favour, 103 against, and 63 abstentions, Members of the European Parliament (MEPs) endorsed a temporary extension of a current derogation of the e-Privacy Directive, which was due to expire on 3 April 2026. This extension was intended to allow time for an agreement on a long-term legal framework to prevent and combat child sexual abuse online. While supporting the extension, MEPs emphasised that the voluntary measures should remain proportional and targeted, should not apply to end-to-end encrypted communications, and should not involve scanning traffic data alongside content data.

Noah Fact Check Pro

The draft above was created using the information available at the time the story first emerged. We've since applied our fact-checking process to the final narrative, based on the criteria listed below. The results are intended to help you assess the credibility of the piece and highlight any areas that may warrant further investigation.

Freshness check

Score: 8

Notes: The article was published on 9 April 2026, reporting on the European Parliament's decision not to extend the interim derogation for detecting child sexual abuse material online, which expired on 3 April 2026. The content is current and addresses recent legislative developments.

Quotes check

Score: 7

Notes: The article includes a direct quote attributed to John Shehan of NCMEC, along with paraphrased statements from major technology companies. However, without access to the original statements, the accuracy and context of this material cannot be independently verified.

Source reliability

Score: 9

Notes: The Guardian is a reputable news organisation known for its investigative journalism. Even so, the article relies on statements from the companies involved and on European Parliament press releases, so its claims should be cross-referenced with primary sources to ensure accuracy.

Plausibility check

Score: 8

Notes: The article's claims align with known legislative processes and recent events concerning the EU's handling of child sexual abuse material detection. However, the assertion that the lapse will lead to undetected crimes is speculative and lacks direct evidence.

Overall assessment

Verdict (FAIL, OPEN, PASS): PASS

Confidence (LOW, MEDIUM, HIGH): MEDIUM

Summary: The article provides current information on the European Parliament's decision not to extend the interim derogation for detecting child sexual abuse material online. While the source is reputable, the reliance on statements from the involved parties and the European Parliament's press releases, without independent verification from neutral third-party sources, introduces some uncertainty. The speculative nature of the article's claims about the impact of the legislative lapse further affects confidence in its accuracy. Therefore, the overall confidence in the article's reliability is medium.