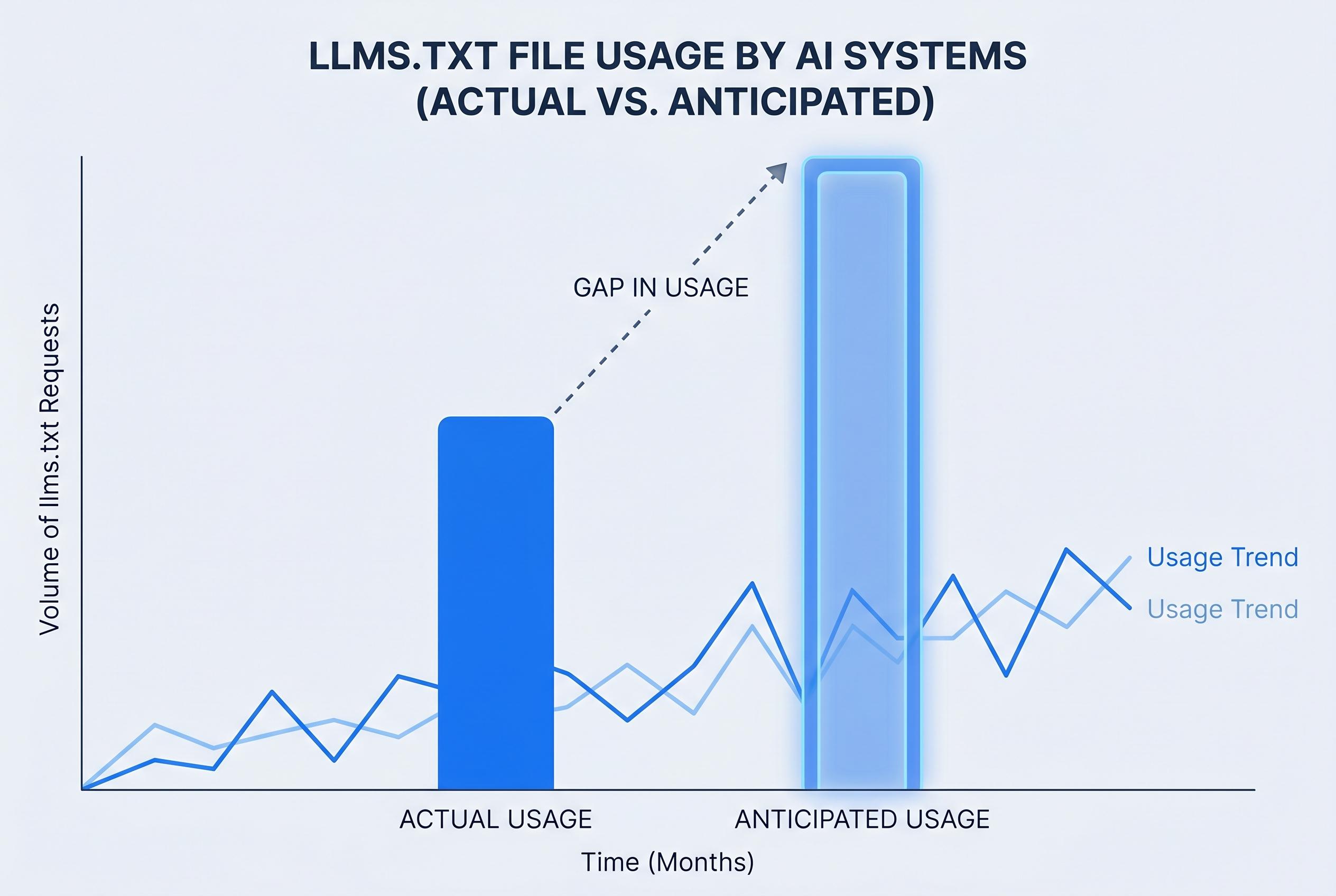

A recent audit reveals that major AI systems are not using llms.txt files as widely as anticipated, raising questions about the format's current effectiveness and adoption in AI and SEO practices.

The idea of an llms.txt file has attracted far more attention than evidence. An August 2025 audit by Flavio Longato, who works in LLM optimisation and SEO at Adobe, found that GPTBot, ClaudeBot and PerplexityBot did not request llms.txt even once across 1,000 Adobe Experience Manager domains over a 30-day period. Instead, roughly 95% of requests came from Google’s desktop crawler, with seven from Bingbot and ten from OpenAI’s search bot, while SEO tools such as Semrush and Ahrefs also made up a noticeable share of the traffic.

That matters because llms.txt is still only a proposed standard, not an agreed one. The file is intended to sit in a site’s root directory as a Markdown guide to a website’s key pages, giving machines a cleaner map of important content than a normal HTML page. But the central promise of the format is that AI systems would use it, and the available evidence does not show that happening.

Longato’s audit is not the only signal pointing in that direction. A live experiment reported by Complete SEO, based on opted-in users of an llms.txt WordPress plugin, also found that major AI crawlers were not reading llms.txt files. Semrush has similarly noted that none of the major LLM companies, including OpenAI, Google and Anthropic, has officially said it follows the file, and that while conventional search crawlers may fetch it, the dedicated AI bots have shown little interest.

The distinction is important. Googlebot and Bingbot are built to crawl broadly, so they will often request any discoverable file on a site, llms.txt included. That does not mean the file is being treated as a meaningful signal. In practice, the traffic seen in logs appears to reflect ordinary crawler behaviour, not support for a new AI-SEO standard.
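Site owners who want to see this in their own logs can run a similar count. The following is a minimal Python sketch, assuming access logs in the common "combined" format; the `BOT_TOKENS` list and the sample lines are illustrative assumptions, not data from any of the audits, and each vendor's documentation should be checked for current user-agent tokens.

```python
import re
from collections import Counter

# User-agent substrings to look for. Illustrative assumption: verify the
# current tokens against each vendor's own crawler documentation.
BOT_TOKENS = ["GPTBot", "ClaudeBot", "PerplexityBot", "OAI-SearchBot",
              "Googlebot", "bingbot"]

# Combined log format: "METHOD path HTTP/x" status size "referer" "user-agent"
LINE_RE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"'
)

def count_llms_txt_hits(lines):
    """Count requests for /llms.txt, grouped by the first matching bot token."""
    counts = Counter()
    for line in lines:
        match = LINE_RE.search(line)
        if not match:
            continue
        # Ignore any query string when comparing the requested path.
        if match.group("path").split("?")[0] != "/llms.txt":
            continue
        ua = match.group("ua").lower()
        label = next((t for t in BOT_TOKENS if t.lower() in ua), "other")
        counts[label] += 1
    return counts

# Invented sample lines, for illustration only.
SAMPLE = [
    '203.0.113.5 - - [01/Aug/2025:10:00:00 +0000] "GET /llms.txt HTTP/1.1" '
    '200 512 "-" "Mozilla/5.0 (compatible; Googlebot/2.1)"',
    '203.0.113.6 - - [01/Aug/2025:10:05:00 +0000] "GET /llms.txt HTTP/1.1" '
    '200 512 "-" "Mozilla/5.0 (compatible; bingbot/2.0)"',
    '203.0.113.7 - - [01/Aug/2025:10:06:00 +0000] "GET /index.html HTTP/1.1" '
    '200 2048 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
]

if __name__ == "__main__":
    print(count_llms_txt_hits(SAMPLE))
```

A count like this only shows who fetched the file. It says nothing about whether the content was used afterwards, which is the same limitation the audits face.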

For site owners, that leaves llms.txt in an awkward position. It is unlikely to cause harm, and it can be generated automatically with little effort, but there is no strong evidence that it improves visibility in AI answers, speeds indexing or replaces structured data. If a team wants to publish one as a low-cost experiment, that is one thing. Building a strategy around it is another.
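For teams that do run the experiment, generation can be a few lines of code. The sketch below assumes the layout described in the public llms.txt proposal (a title heading, a short blockquote summary, then sections of Markdown links); the function name, site name, URLs and descriptions are all hypothetical.

```python
def build_llms_txt(site_name, summary, sections):
    """Render an llms.txt body as Markdown.

    `sections` maps a section heading to a list of
    (title, url, description) tuples.
    """
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, description in links:
            lines.append(f"- [{title}]({url}): {description}")
        lines.append("")
    # End with exactly one trailing newline.
    return "\n".join(lines).rstrip() + "\n"

if __name__ == "__main__":
    # Hypothetical site data, for illustration only.
    print(build_llms_txt(
        "Example Site",
        "Documentation and guides for the Example product.",
        {"Docs": [("Getting started",
                   "https://example.com/docs/start",
                   "Installation and first steps")]},
    ))
```

In practice the input would come from a CMS or sitemap, and the result would be written to the site's root directory as `llms.txt`.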

The more reliable work is much less glamorous. Clean semantic HTML remains fundamental, because both search engines and AI systems still have to parse the page they are given. Proper use of elements such as article, section, nav and main makes content easier to interpret than a site built from generic div containers and styling hooks.

Structured data is another area where the case is stronger. Schema.org markup in JSON-LD format is already supported by major search engines and is far more established than llms.txt. For articles, guides, FAQs and product pages, it gives machines information in a form they can already use. Metadata such as title tags, descriptions and canonical URLs also remains essential.
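As an illustration, here is a minimal Python sketch that builds a Schema.org `Article` description and wraps it in a JSON-LD script tag. The function name and field values are hypothetical, and only a handful of common properties are shown; a real page would follow the property requirements published by Schema.org and the search engines it targets.

```python
import json

def article_json_ld(headline, author, date_published, url):
    """Return a <script> tag containing Schema.org Article markup as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,
        "mainEntityOfPage": url,
    }
    # Escape "<" so a value containing "</script>" cannot close the tag early.
    payload = json.dumps(data, indent=2).replace("<", "\\u003c")
    return f'<script type="application/ld+json">\n{payload}\n</script>'

if __name__ == "__main__":
    # Hypothetical values, for illustration only.
    print(article_json_ld(
        "Do AI crawlers read llms.txt?",
        "Jane Doe",
        "2026-04-25",
        "https://example.com/llms-txt",
    ))
```

The resulting tag goes in the page's HTML, where crawlers that already support JSON-LD can read it without any new convention.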

Visual content is often the weakest part of the experience for both crawlers and language models. Images without meaningful alt text, and video without transcripts, create gaps that no root-level text file can fix. If AI systems are to quote, summarise or recommend content accurately, they need the surrounding context that semantically rich pages and transcripts provide.
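A quick way to find such gaps is to scan pages for images with no usable alt text. The following is a minimal sketch using Python's standard-library HTML parser; the class name and sample markup are hypothetical. An empty `alt` is legitimate for purely decorative images, so the output is a list of candidates to review rather than a list of errors.

```python
from html.parser import HTMLParser

class MissingAltFinder(HTMLParser):
    """Collect the src of every <img> whose alt attribute is absent or blank."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attr_map = dict(attrs)
        alt = attr_map.get("alt")
        # A missing, valueless or whitespace-only alt is flagged for review.
        if alt is None or not alt.strip():
            self.missing.append(attr_map.get("src", "(no src)"))

if __name__ == "__main__":
    # Hypothetical markup, for illustration only.
    finder = MissingAltFinder()
    finder.feed('<main><img src="chart.png" alt="Requests per crawler">'
                '<img src="hero.jpg"></main>')
    print(finder.missing)
```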

The same applies to discoverability. A well-maintained sitemap.xml still does a better job of listing a site’s important URLs than llms.txt, and robots.txt remains the real mechanism for controlling crawler access. OpenAI, Anthropic and Perplexity all document their bots, and site owners can already decide what those crawlers may or may not reach.
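To check what a given set of robots.txt rules actually permits, Python's standard library includes a parser. The sketch below is a minimal example; the rules and URLs are hypothetical, and the user-agent tokens should be confirmed against each vendor's documentation.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: keep GPTBot out of /private/, allow everything else.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /private/

User-agent: *
Allow: /
"""

def can_fetch(robots_txt, user_agent, url):
    """Report whether the given rules allow user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)

if __name__ == "__main__":
    for agent in ("GPTBot", "ClaudeBot"):
        allowed = can_fetch(ROBOTS_TXT, agent,
                            "https://example.com/private/report.html")
        print(agent, "allowed" if allowed else "blocked")
```

This only tests what the rules say. Whether a crawler honours them is up to the crawler, so server logs remain the place to confirm actual behaviour.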

Seen in that light, llms.txt looks less like a breakthrough and more like a useful test case for the gap between hype and adoption. It is a neat idea, but the available logs suggest that the real users of the file today are SEO tools and ordinary crawlers, not the AI systems it was meant to guide. For now, the practical answer is simple: if you have time to spare, generate one automatically; if not, invest the effort where the evidence is stronger.

Source Reference Map

Inspired by headline at: [1]

Sources by paragraph:

- Paragraph 1: [2]
- Paragraph 2: [4]
- Paragraph 3: [3], [4]
- Paragraph 4: [2], [4]
- Paragraph 5: [2], [7]
- Paragraph 6: [4], [7]
- Paragraph 7: [1]
- Paragraph 8: [1], [6]
- Paragraph 9: [5], [1]
- Paragraph 10: [1], [2]

Source: Noah Wire Services

Verification / Sources

- https://habr.com/ru/articles/1027740/?utm_source=habrahabr&utm_medium=rss&utm_campaign=1027740 - Please view link - unable to access data

- https://www.longato.ch/llms-recommendation-2025-august/ - In August 2025, Flavio Longato conducted an audit of 1,000 Adobe Experience Manager domains over 30 days to assess the usage of llms.txt files by AI crawlers. The findings revealed that major AI crawlers, including GPTBot, ClaudeBot, and PerplexityBot, did not access the llms.txt files at all. Instead, Google's desktop crawler accounted for approximately 95% of all hits, while BingBot made seven requests, primarily on a single domain. OpenAI's search bot, OpenAIBotSearch, made ten requests, and SEO tools like Semrush and Ahrefs also generated significant traffic, though unrelated to LLMs. This audit challenges the effectiveness of llms.txt as a standard for AI SEO optimization.

- https://completeseo.com/are-ai-bots-actually-reading-llms-txt-files/ - A live experiment tracked whether major AI bots, such as GPTBot, ClaudeBot, and PerplexityBot, were accessing llms.txt files across the web. The experiment involved monitoring AI crawler behavior via opted-in users of an llms.txt WordPress plugin. The results indicated that AI crawlers were not accessing the llms.txt files, suggesting that these bots are not currently utilizing this file for AI-specific permissions and content rules. This finding aligns with previous audits that found minimal engagement with llms.txt by AI crawlers.

- https://www.semrush.com/blog/llms-txt/ - An article from Semrush discusses the current state of llms.txt, a proposed standard for guiding AI crawlers like GPTBot and PerplexityBot. The article highlights that llms.txt is still a proposed standard and is not yet widely adopted by major AI companies. It notes that none of the major LLM companies, including OpenAI, Google, or Anthropic, have officially stated they are following these files when they crawl websites. The article also mentions that while Googlebot and Bingbot have accessed llms.txt files, AI crawlers have not shown significant interest, indicating that the file is not yet a standard for AI SEO optimization.

- https://getmentio.io/en/blog/gptbot-claudebot-perplexitybot-access-configuration - This guide provides information on configuring access for AI crawlers GPTBot, ClaudeBot, and PerplexityBot. It explains that all three AI crawlers can be completely blocked through the robots.txt file using specific commands. The guide also discusses the differences in scanning strategies among these crawlers, noting that PerplexityBot is the least aggressive and focuses on authoritative domains, ClaudeBot automatically ignores paid pages, and GPTBot scans most actively for training future models. The guide emphasizes the importance of managing AI crawler access through robots.txt and llms.txt files for precise control.

- https://pressonify.ai/learn/ai-crawler-audit - An AI Crawler Audit guide that helps website owners optimize their sites for 11 major AI crawlers, including GPTBot, ClaudeBot, and PerplexityBot. The guide covers configuring robots.txt, llms.txt, sitemap-ai.xml, and verifying that AI crawlers can access content. It emphasizes the importance of auditing for AI crawlers in addition to traditional search engine crawlers, as AI crawlers are becoming increasingly important for content visibility. The guide provides practical steps for auditing and optimizing websites to ensure they are discoverable by AI crawlers.

- https://reaudit.io/blog/llms-txt-guide-complete-tutorial - A comprehensive guide on llms.txt, explaining what it is, how to create one, and why it matters for AI search. The guide discusses the purpose of llms.txt as a proposed standard for guiding AI crawlers to important content on a website. It provides a checklist for creating an llms.txt file, including deciding on featured content, creating the file in Markdown format, verifying the file returns a 200 response, and monitoring server logs for AI bot access. The guide also addresses common questions about llms.txt, such as its impact on Google rankings and whether it can be generated automatically.

Noah Fact Check Pro

The draft above was created using the information available at the time the story first emerged. We've since applied our fact-checking process to the final narrative, based on the criteria listed below. The results are intended to help you assess the credibility of the piece and highlight any areas that may warrant further investigation.

Freshness check

Score: 3

Notes: The article references an August 2025 audit by Flavio Longato, which is over seven months old. The most recent source cited is from March 2026, indicating that the content may be outdated. The article was published on April 25, 2026, but the information it presents is not current. This raises concerns about the freshness of the information provided.

Quotes check

Score: 2

Notes: The article includes direct quotes from Flavio Longato's audit. However, these quotes are not independently verifiable through other sources. The absence of corroborating sources for these quotes diminishes their reliability.

Source reliability

Score: 4

Notes: The primary source is an article from Habr, a Russian tech community platform. While Habr is known for its technical content, it is not a mainstream news outlet, which may affect the perceived reliability of the information. Additionally, the article relies heavily on a single audit by Flavio Longato, which may not be representative of broader trends.

Plausibility check

Score: 5

Notes: The claims about the lack of AI crawler activity on llms.txt files are plausible, given that major AI companies have not officially adopted the llms.txt standard. However, the article does not provide sufficient evidence to fully support these claims, and the reliance on a single audit raises questions about the generalizability of the findings.

Overall assessment

Verdict (FAIL, OPEN, PASS): FAIL

Confidence (LOW, MEDIUM, HIGH): HIGH

Summary: The article presents outdated information, relies on unverifiable quotes, and lacks independent verification from reputable sources. Its content type is more opinion-based than factual reporting, further diminishing its suitability for publication. Given these issues, the article does not meet the necessary standards for factual reporting.