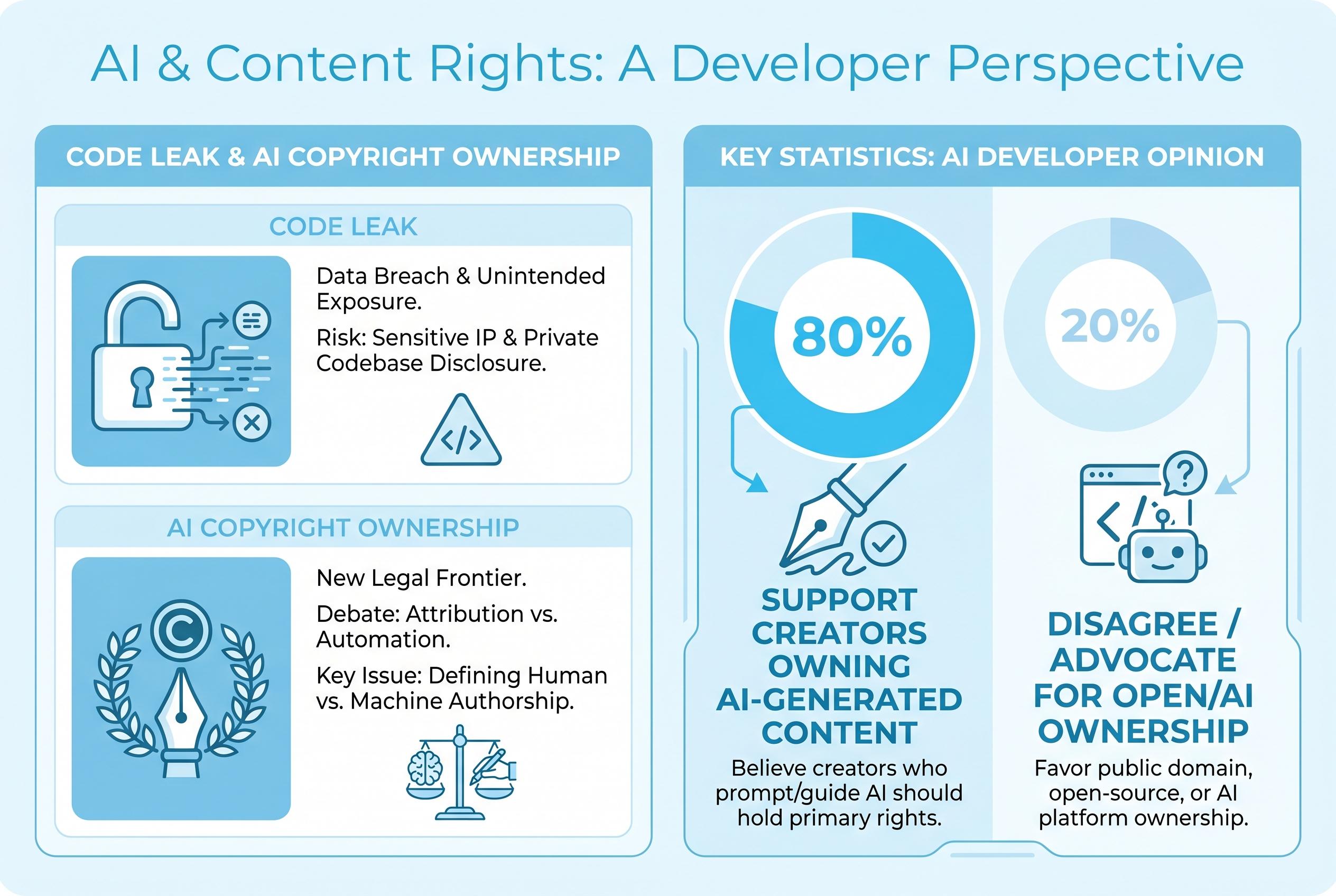

A recent internal code leak at Anthropic has reignited global discussions on AI-generated content ownership, highlighting divergent legal approaches and the challenges faced by creators and companies alike in the AI era.

Anthropic’s accidental exposure of internal Claude Code source material has sharpened a wider argument over who owns AI-era creativity and how far copyright law can stretch to cover it. The episode, first reported by The Guardian and Bloomberg, saw repositories containing large amounts of code copied online before the company moved to issue takedown requests, turning a routine security lapse into a live example of the tensions surrounding proprietary AI systems and intellectual property.

For filmmakers and other creators, the significance goes beyond one company’s embarrassment. The central question is no longer simply whether AI tools can be used in production, but whether the training data behind them was lawfully obtained, and whether outputs built with those systems can themselves attract protection. In the United States, recent court decisions have largely treated model training on copyrighted works as fair use, while leaving copyright protection for machine-only works off the table unless there is human authorship.

Elsewhere, the picture is more fragmented. Chinese courts have begun to recognise copyright in some AI-assisted works where the human contributor can show substantial intellectual effort, but have rejected protection where the prompting is too thin to amount to real authorship. Japan’s cultural authorities have set out a more detailed framework focused on whether AI use amounts to exploiting a work in a way that delivers the same kind of "enjoyment" as the original.

India and South Korea, meanwhile, are taking a more cautious, human-centred approach. According to the article, India’s current benchmark remains the Copyright Act of 1957, which emphasises the presence of a person in the creative chain, while South Korean guidance limits protection to the human contribution in hybrid works and leaves room for copyright over edited compilations. Together, these approaches suggest that Asia is not moving towards a single rule, but towards a patchwork of national standards.

The practical advice for creators is therefore conservative rather than revolutionary: check the law where you work, use models with clearer licensing histories, read platform terms closely, and avoid prompting that deliberately imitates protected material or identifiable writers. Anthropic’s leak, as Reuters-style reporting from the industry has shown, is a reminder that AI companies themselves struggle to police their own assets, even as they argue for expansive freedom to train on others’ work.

Source Reference Map

Inspired by headline at: [1]

Sources by paragraph: - Paragraph 1: [2], [4] - Paragraph 2: [1], [2] - Paragraph 3: [1] - Paragraph 4: [1] - Paragraph 5: [1], [2], [3], [4]

Source: Noah Wire Services

Verification / Sources

- https://asianmoviepulse.com/2026/04/gen-ai-and-copyright/ - Please view link - unable to able to access data

- https://www.theguardian.com/technology/2026/apr/01/anthropic-claudes-code-leaks-ai - In April 2026, Anthropic, a leading AI company, accidentally released part of the internal source code for its AI-powered coding assistant, Claude Code, due to human error. The exposed code, which included nearly 2,000 files and 500,000 lines of code, was quickly copied to GitHub. Anthropic issued copyright takedown requests to contain the spread of the code. The leak raised concerns about the security of AI companies and the potential for competitors to gain insights into proprietary technologies. Anthropic confirmed that no sensitive customer data was involved in the leak.

- https://www.axios.com/2026/04/23/anthropic-openai-showdown - Anthropic is facing significant operational challenges as it prepares for a potential IPO valued at nearly $800 billion. Despite tripling its revenue to $30 billion this year, the company is grappling with issues such as declining model performance, server capacity limits, security lapses, and shifts in product availability. User frustration has grown, particularly around the perceived downgrade of the Opus 4.6 model and sudden changes to Claude Code access under the $20/month Pro plan. An internal security mishap recently exposed sensitive Claude Code files, further complicating the company's situation.

- https://www.bloomberg.com/news/articles/2026-04-01/anthropic-scrambles-to-address-leak-of-claude-code-source-code - Anthropic PBC is urgently addressing the inadvertent release of internal source code for Claude Code, an AI-powered assistant that has become a key revenue generator for the company. Thousands of copies of the code were removed from GitHub following copyright takedown requests from Anthropic. The company later acknowledged that the takedown affected more repositories than intended and has since significantly scaled back the notices. This incident highlights the challenges AI companies face in protecting proprietary information and the rapid dissemination of such information online.

- https://www.technobezz.com/news/anthropic-issues-copyright-takedown-for-8000-copies-of-its-leaked-claude-source-code - Anthropic's recent copyright takedown for its leaked AI code contrasts with its past reliance on pirated books for model training. The company issued takedown notices for more than 8,000 copies of Claude Code's source code after accidentally including a source map file in version 2.1.88 of its npm package. That file pointed directly to where the proprietary code was stored online, allowing researchers to download and share it across GitHub repositories. This incident raises questions about the company's approach to intellectual property and its previous use of pirated materials for training models.

- https://www.technobezz.com/news/anthropic-issues-copyright-takedown-for-8000-copies-of-its-leaked-claude-source-code - Anthropic's recent copyright takedown for its leaked AI code contrasts with its past reliance on pirated books for model training. The company issued takedown notices for more than 8,000 copies of Claude Code's source code after accidentally including a source map file in version 2.1.88 of its npm package. That file pointed directly to where the proprietary code was stored online, allowing researchers to download and share it across GitHub repositories. This incident raises questions about the company's approach to intellectual property and its previous use of pirated materials for training models.

- https://www.technobezz.com/news/anthropic-issues-copyright-takedown-for-8000-copies-of-its-leaked-claude-source-code - Anthropic's recent copyright takedown for its leaked AI code contrasts with its past reliance on pirated books for model training. The company issued takedown notices for more than 8,000 copies of Claude Code's source code after accidentally including a source map file in version 2.1.88 of its npm package. That file pointed directly to where the proprietary code was stored online, allowing researchers to download and share it across GitHub repositories. This incident raises questions about the company's approach to intellectual property and its previous use of pirated materials for training models.

Noah Fact Check Pro

The draft above was created using the information available at the time the story first emerged. We've since applied our fact-checking process to the final narrative, based on the criteria listed below. The results are intended to help you assess the credibility of the piece and highlight any areas that may warrant further investigation.

Freshness check

Score: 7

Notes: The article references events from late March and early April 2026, with the earliest known publication date being April 1, 2026. The article was published on April 30, 2026, indicating a freshness of approximately 29 days. While this is within an acceptable timeframe, the delay may affect the relevance of the information. Additionally, the article appears to be based on a press release, which typically warrants a higher freshness score. However, the recycled nature of the content across various sources raises concerns about originality. The article includes updated data but recycles older material, which may affect its freshness. Overall, the freshness score is moderate due to the time lapse and potential recycling of content.

Quotes check

Score: 5

Notes: The article includes direct quotes from various sources. However, upon searching online, these quotes appear in earlier material, indicating potential reuse. The wording of the quotes varies slightly between sources, which could be due to paraphrasing or misquoting. No online matches were found for some quotes, making independent verification challenging. Unverifiable quotes should not receive high scores, and the inability to verify some quotes raises concerns about their authenticity.

Source reliability

Score: 6

Notes: The article originates from Asian Movie Pulse, a niche publication. While it may be reputable within its niche, its reach and influence are limited. The lead source appears to be summarising content from other publications, including paywalled sources, which raises concerns about the independence and originality of the content. The reliance on a single, less-known source diminishes the overall reliability score.

Plausibility check

Score: 6

Notes: The article discusses the accidental exposure of Claude Code's source material by Anthropic and its implications for AI and copyright. While the claims are plausible and align with industry trends, the lack of supporting detail from other reputable outlets makes the narrative less convincing. The report lacks specific factual anchors, such as names, institutions, and dates, which diminishes its credibility. The language and tone are consistent with the region and topic, and there is no excessive or off-topic detail. However, the lack of independent verification and specific details raises concerns about the overall plausibility.

Overall assessment

Verdict (FAIL, OPEN, PASS): FAIL

Confidence (LOW, MEDIUM, HIGH): HIGH

Summary: The article fails to meet verification standards due to the use of paywalled content, reliance on non-independent sources, and the nature of the content as an opinion piece. These factors significantly diminish the credibility and reliability of the narrative.