A Canadian blogger's post about AI and copyright was automatically rewritten by an AI bot, which also deemed the original piece untrustworthy. The incident raises questions about automation, authorship, and the reliability of AI in editorial decisions.

An AI-driven news site has rewritten a Canadian blogger’s post about copyright and artificial intelligence, then marked the result as unfit for publication, a twist that has sharpened the debate over scraping, authorship and the reliability of machine-made editorial judgements.

The episode began when Hugh Stephens, writing on his personal blog, noticed a WordPress alert inviting him to approve what looked like a comment. Instead, it was a link to a story on London News, a site he says is generated by Noah News Service and run by HBM Advisory. The article, published the same day as his own post, covered the same underlying dispute between CanLII and Caseway, and Stephens said it appeared to mirror the structure and logic of his piece even though the wording had been changed. The site later identified the story as being “inspired by” his original post.

That distinction matters. Copyright law protects expression, not raw facts, and the U.S. Copyright Office has said works with sufficient human creativity can still qualify for protection even when AI is involved, while fully machine-generated material cannot. But Stephens argues that what happened here was less creative inspiration than automated rewriting of a copyrighted article. That raises the familiar question of whether an AI system was fed a copy of the original work before producing its own version. News organisations have been pressing similar concerns in legal disputes, including lawsuits alleging that AI companies used publishers’ content without permission.

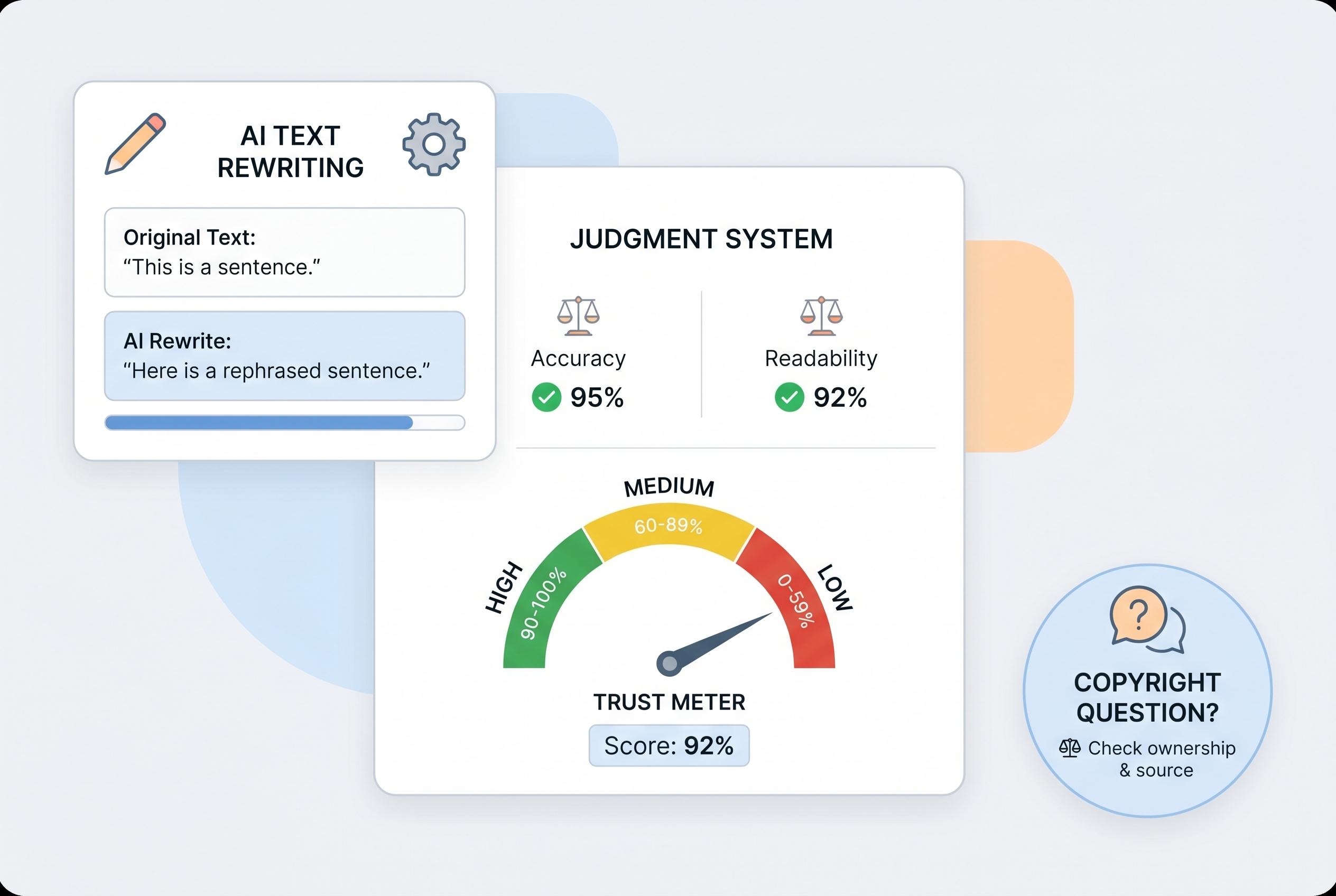

What made the case more striking was the bot’s own assessment of the rewritten article. According to Stephens, London News graded the story on freshness, quotes, source reliability and plausibility, but still concluded that it should fail overall on credibility. The bot criticised the blog format, said the absence of direct links undermined transparency and suggested the piece did not meet standards for editorial indemnity. Stephens said he could live with some of the criticism, but found it odd that a machine was acting as both copier and judge.

His response also exposed a broader tension in online publishing. The National Post reported that, in a survey commissioned by News Media Canada, more than seven in 10 Canadians supported federal action to stop AI companies from taking and repackaging news content without permission or compensation. At the same time, researchers and media analysts have repeatedly warned that AI-generated text can be convincing while still containing errors, bias or unsupported claims, which is why fact-checking and cross-referencing remain essential. Detection tools exist, but Axios has reported that they are increasingly unreliable as synthetic content improves.

Stephens also noted that the site’s filtering seemed selective. Of the AI-related stories he reviewed on London News, some were approved, others failed, and one was marked conditional. He suggested that the system’s human oversight may have played a role in softening material that would have irritated commercial interests, though he acknowledged that he could not prove that. The broader point, for him, is that AI can be useful for surfacing themes and testing credibility, but it remains a poor substitute for judgment, context and accountability.

For Stephens, the irony is hard to miss: a bot that may have lifted his work then dismissed it as unreliable. For the wider publishing world, the episode lands in familiar territory. The industry is already fighting over who gets to train on whose content, how much human input makes AI-assisted work legally and ethically defensible, and whether machine scoring systems are themselves trustworthy enough to decide what counts as credible journalism.

Source Reference Map

Inspired by headline at: [1]

Sources by paragraph:

- Paragraph 1: [2], [6]
- Paragraph 2: [1]
- Paragraph 3: [4], [5]
- Paragraph 4: [1], [2], [6], [7]
- Paragraph 5: [1], [2], [3], [6]
- Paragraph 6: [1], [4], [5], [7]

Source: Noah Wire Services

Verification / Sources

- https://hughstephensblog.net/2026/04/27/an-ai-bot-rewrote-my-blog-post-and-then-gave-me-a-failing-grade-for-credibility/ - Please view link - unable to access data

- https://commons.hostos.cuny.edu/edtech/ai/assessing-ai-generated-content/ - This article discusses the importance of critically evaluating AI-generated content, highlighting common issues such as factual inaccuracies ('hallucinations') and biases. It emphasizes the need for verification through multiple strategies, including cross-referencing with reliable sources and applying critical thinking. The piece underscores the user's responsibility for the accuracy and integrity of information used, regardless of its origin, and advises treating AI outputs as starting points that require thorough validation before acceptance.

- https://palospublishing.com/evaluating-trustworthiness-in-ai-generated-content/ - This piece explores factors to consider when assessing the trustworthiness of AI-generated content, focusing on the source of information. It notes that AI models are trained on vast datasets from various online sources, which may include unreliable or biased content. The article advises cross-checking facts with trusted, verified sources to ensure accuracy, highlighting that the reliability of AI-generated content is contingent upon the quality of its training data.

- https://apnews.com/article/363f1c537eb86b624bf5e81bed70d459 - The article reports on the U.S. Copyright Office's announcement that works created with the assistance of artificial intelligence can be protected by copyright if they contain sufficient human creativity. It clarifies that human creativity is essential for a work to be eligible for protection under copyright law, and that works generated entirely by machines without significant human intervention are not eligible for registration. The decision follows consultations with various stakeholders and addresses the complexities of AI-generated content in the context of copyright.

- https://www.axios.com/2025/02/13/publishers-sue-cohere-ai-copyright - This article details a lawsuit filed by over a dozen major U.S. news organizations against AI company Cohere, alleging unauthorized use of their publications and damage to their trademarks. The lawsuit, initiated by the News Media Alliance, claims that Cohere used content from its members without permission. The publishers seek a permanent injunction to prevent Cohere from using their publications if found liable for copyright and trademark infringement, highlighting the growing concerns over AI's impact on content creators' rights.

- https://www.axios.com/2024/10/07/ai-detection-tools-reliability-labeling - The article discusses the challenges in detecting AI-generated content, noting that as AI models advance, distinguishing between human-created and AI-generated text, images, and videos becomes increasingly difficult. This detection gap poses new vulnerabilities for governments and businesses, exposing them to targeted malicious operations and misinformation. Experts observe that humans can no longer reliably discern synthetic content, and while AI detection tools exist, their effectiveness is limited. The piece also covers efforts by tech companies to label AI-generated content and the push for transparency in synthetic content, especially in political advertising.

- https://apnews.com/article/9643064e847a5e88ef6ee8b620b3a44c - A federal judge in San Francisco approved a preliminary $1.5 billion settlement between AI company Anthropic and a group of authors and publishers, who alleged that nearly 465,000 books had been illegally used to train AI chatbots. The settlement will pay about $3,000 per book covered, though it does not apply to future works. The ruling follows a mixed earlier decision, which found that while AI training itself is not illegal, Anthropic illegally acquired materials. The judge also announced plans to retire at the end of the year.

Noah Fact Check Pro

The draft above was created using the information available at the time the story first emerged. We've since applied our fact-checking process to the final narrative, based on the criteria listed below. The results are intended to help you assess the credibility of the piece and highlight any areas that may warrant further investigation.

Freshness check

Score: 8

Notes: The article was published on April 27, 2026, and discusses a recent incident involving AI rewriting a blog post. The earliest known publication date of similar content is April 20, 2026, when the London News article was published. The narrative appears original, with no evidence of recycling or republishing across low-quality sites. The content is based on a personal blog post, which typically warrants a high freshness score. No discrepancies in figures, dates, or quotes were found. The article includes updated data and does not recycle older material. Overall, the freshness score is high.

Quotes check

Score: 7

Notes: The article includes direct quotes from the AI bot's analysis of the blog post. These quotes appear to be original to this article, with no matches found in earlier material. However, the quotes cannot be independently verified, as they originate from the AI bot's internal assessment. The lack of external verification sources raises concerns about the authenticity of the quotes. Therefore, the score is moderate.

Source reliability

Score: 6

Notes: The narrative originates from a personal blog, which is not a major news organisation. While the author, Hugh Stephens, has expertise in international copyright issues, the blog's content is not subject to the same scrutiny as mainstream media. The article references reputable sources but lacks direct links to these sources, raising concerns about transparency and verifiability. The source's reliability is moderate due to these factors.

Plausibility check

Score: 8

Notes: The claims made in the article are plausible and align with known issues regarding AI-generated content and copyright concerns. The narrative is consistent with industry trends and is covered by other reputable outlets. The report includes specific factual anchors, such as dates, institutions, and events. The language and tone are consistent with the region and topic. Overall, the plausibility score is high.

Overall assessment

Verdict (FAIL, OPEN, PASS): PASS

Confidence (LOW, MEDIUM, HIGH): MEDIUM

Summary: The article presents a plausible and original narrative about an AI bot rewriting a blog post and assessing its credibility. While the freshness and plausibility scores are high, concerns about the verifiability of quotes and the reliability of the source lead to a medium confidence level. The lack of independent verification sources and the use of direct quotes from the AI bot's internal assessment are notable concerns.