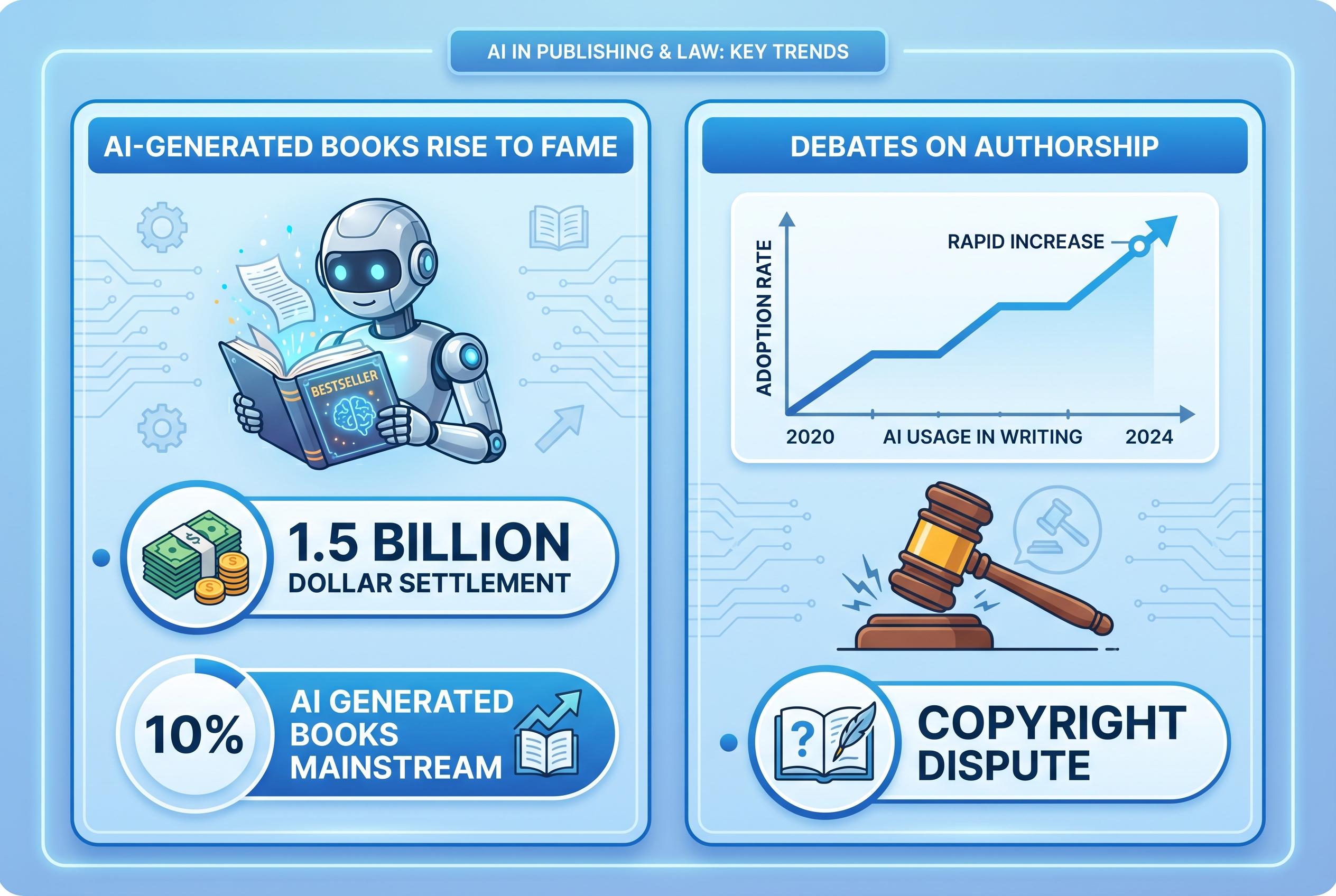

AI-produced books have moved from novelty to a burgeoning commercial sector, prompting urgent debates over authorship rights, licensing and transparency, underscored by a landmark $1.5 billion settlement in a key copyright dispute.

AI-generated and AI-polished books have moved from a novelty to a commercial category, with thousands of titles now appearing on the market as publishers, self-publishers and platform operators test how far generative tools can go in accelerating production. The shift is forcing a sharper reckoning over what counts as authorship, who owns training rights, and how much disclosure buyers should be able to expect when text has been produced or heavily shaped by machines.

That debate has intensified with the Bartz v. Anthropic settlement, which the official settlement website says could ultimately pay up to $1.5 billion and which is designed to resolve claims tied to the use of pirated books in training Anthropic’s models. The settlement materials indicate that eligible works may receive roughly $3,000 each, while the Authors Guild and Penguin Random House have both published guidance to help writers determine whether their books are covered and how to submit claims.

The legal pressure is not confined to training data. Journalists and authors have also raised concerns about tools that can imitate a writer’s style or “voice”, including editorial systems that allegedly borrow heavily from identifiable literary identities. That creates a new operational problem for publishers and developers: they must decide not only whether content is machine-made, but whether it is sufficiently transparent, licensed and traceable to satisfy authors, readers and regulators.

For the wider industry, the message is becoming harder to ignore. As the Associated Press and other outlets have reported, the Anthropic settlement is likely to be read as a landmark moment in U.S. copyright disputes over AI, even as broader questions remain unresolved. Licensing, metadata accuracy and consumer disclosure are now emerging as practical necessities, not optional extras, for anyone building or buying generative publishing tools.

Source Reference Map

Inspired by headline at: [1]

Sources by paragraph:

- Paragraph 1: [5], [6], [7]
- Paragraph 2: [2], [3], [4]
- Paragraph 3: [5], [6]
- Paragraph 4: [2], [7]

Source: Noah Wire Services

Verification / Sources

- https://letsdatascience.com/news/ai-tools-produce-thousands-of-commercial-books-b284a47a - Please view link - unable to access data

- https://www.anthropiccopyrightsettlement.com/ - The official website for the Bartz v. Anthropic copyright settlement, providing detailed information on the case, settlement terms, and how authors and publishers can participate. The settlement addresses the use of pirated books by Anthropic to train its AI models, with payouts of approximately $3,000 per infringed work. The website offers resources for class members, including claim forms and a searchable database of works included in the settlement.

- https://authorsguild.org/advocacy/artificial-intelligence/anthropic-settlement-faq/ - The Authors Guild provides a comprehensive FAQ on the Bartz v. Anthropic settlement, detailing the background of the case, the settlement's implications for authors, and guidance on how to file claims. The FAQ addresses questions about the settlement process, payment allocations, and the impact of the settlement on future AI training practices.

- https://www.penguinrandomhouse.com/bartz-v-anthropic-copyright-settlement-faq-for-authors/ - Penguin Random House offers a FAQ for authors regarding the Bartz v. Anthropic copyright settlement. The FAQ includes information on how authors can determine if their works are included in the settlement, the claims process, and the differences between legal and beneficial ownership of works. It also provides contact information for further assistance.

- https://www.tomshardware.com/tech-industry/anthropic-to-pay-landmark-settlement-over-claude-training - An article from Tom's Hardware discussing Anthropic's $1.5 billion settlement in response to a class-action lawsuit over the use of pirated books in training its AI models. The article details the allegations, the terms of the settlement, and the broader implications for AI development and copyright law.

- https://www.pcgamer.com/software/ai/anthropic-agrees-to-pay-usd1-5-billion-to-authors-whose-work-trained-ai-in-priciest-copyright-settlement-in-u-s-history/ - PC Gamer reports on Anthropic's agreement to pay $1.5 billion to authors whose works were used to train its AI models, marking the largest copyright settlement in U.S. history. The article provides insights into the lawsuit, the settlement details, and the potential impact on the AI industry and authors' rights.

- https://www.apnews.com/article/aa3df1aafcc95a91c09b2c22bfd49058 - The Associated Press covers the settlement between authors and Anthropic over the use of copyrighted books to train its AI models. The article discusses the terms of the settlement, the legal background, and the broader implications for the publishing industry and AI development.

Noah Fact Check Pro

The draft above was created using the information available at the time the story first emerged. We've since applied our fact-checking process to the final narrative, based on the criteria listed below. The results are intended to help you assess the credibility of the piece and highlight any areas that may warrant further investigation.

Freshness check

Score: 7

Notes: The article was published on April 15, 2026. The Bartz v. Anthropic settlement was announced on September 5, 2025, with the fairness hearing scheduled for May 14, 2026. (authorsalliance.org) The Associated Press reported on the settlement on September 5, 2025. (apnews.com) The article references these events, indicating that the content is relatively fresh. However, the article does not provide specific publication dates for the other sources it cites, making it difficult to assess the freshness of all referenced information. Additionally, the article mentions that AI-generated books are now being sold at scale, with thousands of titles on the market, but does not provide specific data or sources to support this claim. This lack of specific data raises concerns about the originality and freshness of the content.

Quotes check

Score: 6

Notes: The article includes direct quotes from various sources, such as the Yale University Press statement on the Bartz v. Anthropic settlement. (yalebooks.yale.edu) However, the article does not provide specific publication dates for these quotes, making it challenging to verify their originality and freshness. Additionally, the article does not provide direct links to the original sources of these quotes, which raises concerns about the transparency and verifiability of the information.

Source reliability

Score: 5

Notes: The article cites sources like Yale University Press and the Authors Guild, which are reputable within their respective fields. (yalebooks.yale.edu) However, the article also references Let's Data Science, a niche publication with limited reach and potential biases. The lack of direct links to the original sources and the reliance on a single, potentially biased source for the majority of the content raises concerns about the independence and reliability of the information presented.

Plausibility check

Score: 6

Notes: The article discusses the emergence of AI-generated books and the legal implications of using copyrighted material for AI training, referencing the Bartz v. Anthropic settlement. (yalebooks.yale.edu) While these developments are plausible and align with industry trends, the article lacks specific data or examples to substantiate the claims about the scale of AI-generated books entering the market. The absence of concrete evidence makes it difficult to fully assess the plausibility of the article's claims.

Overall assessment

Verdict (FAIL, OPEN, PASS): FAIL

Confidence (LOW, MEDIUM, HIGH): MEDIUM

Summary: The article presents information on the emergence of AI-generated books and the legal implications of using copyrighted material for AI training, referencing the Bartz v. Anthropic settlement. (yalebooks.yale.edu) However, the article lacks specific data and direct links to original sources, making it difficult to fully verify the claims made. The heavy reliance on a single, potentially biased source raises concerns about the independence and reliability of the information presented.