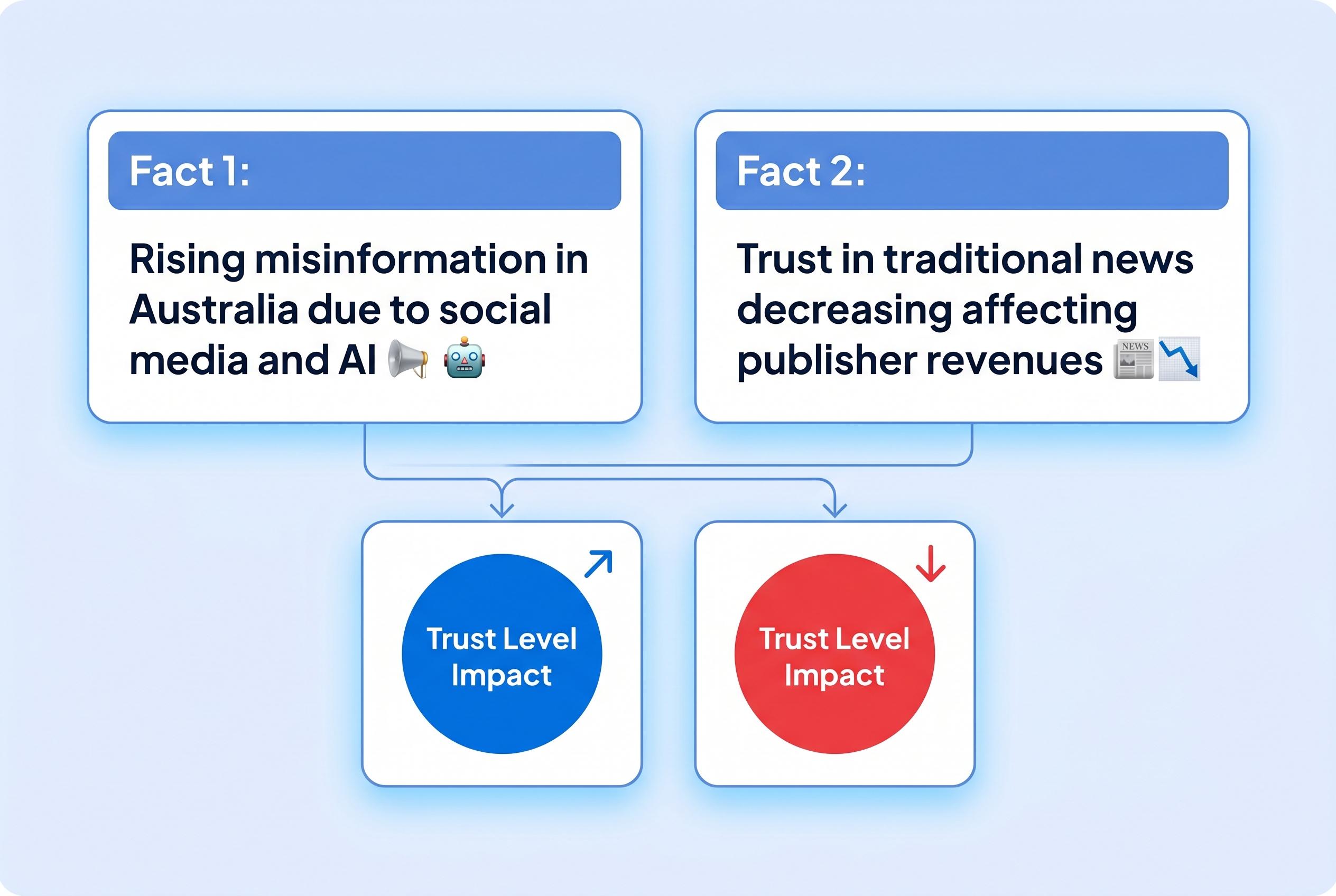

Australians face increasing exposure to low-quality content and AI-generated misinformation, eroding trust in traditional news and impacting publisher revenues, as calls grow for transparency and media literacy improvements.

Australians are being pushed further into a fragmented information landscape as social media feeds, influencers and generative AI tools increasingly compete with traditional news. The concern, according to a new article in The Conversation, is not simply that people are seeing more low-quality material, but that opaque ranking systems and AI-generated summaries are reshaping what audiences encounter before they ever reach a newsroom’s own reporting.

That warning is reinforced by recent evidence from the Australian Communications and Media Authority, which found that 72% of Australian adults using digital platforms in the first half of 2025 came across misinformation online. The regulator also reported a rise in content labelled as created by artificial intelligence, underlining how quickly machine-generated material is becoming part of the problem. Separate research from Queensland University of Technology found that Australians meet misleading information in everyday browsing, not just in politics or health, and that mainstream outlets are often perceived as part of the misinformation problem, a pattern that appears to be corroding trust in credible news.

The broader economic threat is also intensifying. A study on large language models and online news consumption documented a consistent decline in traffic to news publishers since August 2024, and found that blocking generative AI bots can reduce website traffic by 23% and real consumer traffic by 14%. That matters because zero-click search results and AI summaries can satisfy users without sending them to the original source, weakening the audience and revenue base that supports reporting in the first place.

Against that backdrop, the roundtable described in The Conversation report calls for tougher transparency rules for platforms, clearer labelling of AI-generated material, fairer compensation for news used in AI systems and much stronger media literacy efforts. The article argues that without those changes, Australia risks letting invisible algorithms and low-trust content further hollow out the public-interest journalism that underpins democratic debate.

Source Reference Map

Inspired by headline at: [1]

Sources by paragraph:

- Paragraph 1: [2], [1]
- Paragraph 2: [2], [3]
- Paragraph 3: [7], [1]
- Paragraph 4: [1], [2], [3], [7]

Source: Noah Wire Services

Verification / Sources

- https://phys.org/news/2026-04-ai-online-digital-platforms.html - Please view link - unable to access data

- https://www.acma.gov.au/articles/2025-11/majority-australians-encountering-misinformation-online - A report by the Australian Communications and Media Authority (ACMA) reveals that 72% of Australian adults using digital platforms in the first half of 2025 encountered some form of misinformation. The study highlights that 64% of Facebook users experienced misinformation, with false or misleading information about certain social groups being the most common type. The report also notes an increase in content labelled as 'Created by artificial intelligence' compared to the previous year, indicating a growing concern over AI-generated misinformation.

- https://phys.org/news/2026-03-australians-misinformation-online-daily-reveals.html - Research from Queensland University of Technology (QUT) indicates that Australians frequently encounter misinformation in their daily online activities, extending beyond politics and health topics. The study found that participants often identified mainstream news outlets as sources of misleading content, challenging the assumption that misinformation is confined to fringe platforms or extreme topics. This widespread exposure contributes to the erosion of trust and disengagement from credible news sources.

- https://www.nationaltribune.com.au/australians-face-misinformation-online-daily-qut-study/ - A QUT study published in 'Information, Communication & Society' reveals that Australians routinely encounter misinformation online, not just in politics or health, but also in areas like business, celebrity news, and entertainment. The research highlights that mainstream news outlets are often identified as sources of misleading material, leading to a decline in trust and engagement with credible news sources.

- https://www.miragenews.com/most-australians-face-online-misinformation-1576133/ - An ACMA report shows that 72% of Australian adults using digital platforms in the first half of 2025 encountered some form of misinformation. The study indicates that 64% of Facebook users experienced misinformation, with false or misleading information about certain social groups being the most common type. The report also notes an increase in content labelled as 'Created by artificial intelligence' compared to the previous year, highlighting concerns over AI-generated misinformation.

- https://www.miragenews.com/australians-face-misinformation-online-daily-1629810/ - A QUT study published in 'Information, Communication & Society' reveals that Australians frequently encounter misinformation online, extending beyond politics and health topics to areas like business, celebrity news, and entertainment. The research found that mainstream news outlets are often identified as sources of misleading content, contributing to the erosion of trust and disengagement from credible news sources.

- https://arxiv.org/abs/2512.24968 - A study titled 'The Impact of LLMs on Online News Consumption and Production' examines how large language models (LLMs) affect news publishers. The research documents a consistent decline in traffic to news publishers since August 2024 and finds that blocking GenAI bots can reduce website traffic by 23% and real consumer traffic by 14%. The study also notes that LLMs have not yet replaced editorial or content-production jobs but have led to increased use of rich content and advertising technologies.

Noah Fact Check Pro

The draft above was created using the information available at the time the story first emerged. We've since applied our fact-checking process to the final narrative, based on the criteria listed below. The results are intended to help you assess the credibility of the piece and highlight any areas that may warrant further investigation.

Freshness check

Score: 8

Notes: The article was published on April 29, 2026, making it current. However, the content references research from November 2025 and March 2026, which may affect the perceived freshness of the information. (acma.gov.au)

Quotes check

Score: 7

Notes: The article includes direct quotes from experts and researchers. However, without access to the original sources, it's challenging to verify the accuracy and context of these quotes. (phys.org)

Source reliability

Score: 6

Notes: The article is published on Phys.org, which is a reputable science news website. However, the content is based on a report from The Conversation, an independent news and analysis website. The Conversation's content is often republished on Phys.org, which may raise concerns about originality and source independence. (phys.org)

Plausibility check

Score: 7

Notes: The claims about Australians encountering misinformation online and the role of AI in this process are plausible and supported by previous research. However, the article's reliance on a single source for these claims reduces the ability to cross-verify the information. (phys.org)

Overall assessment

Verdict (FAIL, OPEN, PASS): FAIL

Confidence (LOW, MEDIUM, HIGH): MEDIUM

Summary: The article presents current information on Australians encountering misinformation online and the role of AI in this process. However, it heavily relies on a single source, The Conversation, which raises concerns about source independence and verification. The inclusion of quotes without accessible original sources further complicates verification. Given these issues, the content cannot be fully verified, leading to a FAIL verdict with MEDIUM confidence.